SIGGRAPH 2021 was held virtually this year from 9-13 August. The virtual experience also had an On-Demand component that gave attendees access to video recordings of sessions, and to vendor exhibitor videos, through 29 October 2021. Virtual SIGGRAPH was a little hard to navigate because everything, from White Papers and Classes to Vendor talks and Production Sessions, was put under the same “Sessions” tab.

It was good to see vendors like Google, Amazon, Microsoft, and Facebook participate in sessions and add their expertise alongside motion picture and visual effects professionals as content pipelines change. Autodesk again hosted its Vision series of presentations. Side Effects returned with its Hive presentations and classes illustrating features in Houdini.

The Metaverse, virtual production, and the cloud were discussed a lot in presentations. We have a lot to cover, so this year Architosh’s SIGGRAPH virtual show floor coverage comes in Part 1 and Part 2, plus other news and special reports, from this day into next week. Be sure to stop by Architosh frequently to catch our latest in-depth information.

The Metaverse

The Metaverse was initially conceived as part of a dystopian future where virtual universes provided an escape from the dark, dreary worlds of crumbling societies. The word “metaverse” was first used in Neal Stephenson’s 1992 cyberpunk novel Snow Crash. In the book, Hiro Protagonist, a hacker and pizza delivery guy, uses the Metaverse to escape his life. He lives out of a 20-by-30-foot storage container, spending his time in a virtual world while the real one is run by a government corrupted by corporations. You ask, how does this affect me as an architect working in CAD?

Modern tech companies have devoted a ton of financial resources to creating and investing in the future of the Metaverse. NVIDIA had a video demonstrating Omniverse, “Architecture Demo with Live Sync on Omniverse,” in which four artists connected in Omniverse through the Nucleus Server. The four artists were using Revit, 3ds Max, Substance Painter, and Omniverse View, and they worked on the same project simultaneously. It was all done interactively in real-time using NVIDIA’s RTX technology without ever having to do a test render.

In this video about the making of the NVIDIA GTC keynote, one gets a good overall look at the chipmaker’s process. We have more videos from them below (and images too!).

NVIDIA, Epic Games, and Autodesk had sessions discussing the Metaverse and how it would change the way we connect in our lives, not just for fun but also for working together. Advocates for the Metaverse point to a growing digital economy on the web and the ever-increasing benefits for how humans interact using technology. Companies also expressed concerns that people could abuse the Metaverse, as has happened on social media. A couple of sessions discussed the need to set standards so that the software used to create these digital worlds can work together seamlessly without interruption to the user.

In a call with analysts, CEO Mark Zuckerberg of Facebook discussed his company’s quarterly results while putting much of the focus on “The Metaverse.” He explained his vision of Facebook turning into a Metaverse Company. A couple of weeks ago, Microsoft CEO Satya Nadella said his company is building an “enterprise metaverse.” In April, Epic Games announced a billion-dollar funding round to support its metaverse ambitions, pushing the Fortnite maker’s valuation to nearly $30 billion. People are already connected to metaverses in games like Fortnite and Roblox. Architectural firms use Omniverse and Unreal Engine in real-time scenarios working on large-scale projects with teams from other locations.

Global Production

Global Production kicked into high gear with the pandemic. The shift to the cloud will stay with us as cloud solutions grow alongside remote working. Companies want to expand their talent pool by reaching more creative talent worldwide through remote working. AWS delivers cloud solutions that allow artists in different locations to connect via the cloud to a single pipeline. Software developers have created applications that are accessed through the cloud, so nothing has to be installed on your workstation. Rendering and storage were some of the first things to migrate to the cloud.

Netflix has 36 productions using global production technologies to enable access to more creatives around the world. Cloud technologies allow companies like Netflix to have a pipeline on the cloud that creatives can access no matter where they are in the world. Large architectural firms are exploring using Global Production for projects to expand their talent pool while simultaneously reconfiguring or eliminating their offices.

Virtual Production

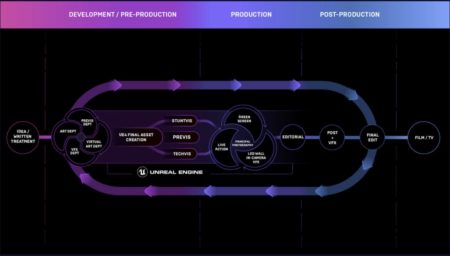

The Mandalorian is one of the projects receiving huge attention for its work using Virtual Production. Virtual production uses a suite of software packages to mix live-action footage with computer graphics in real-time. Filmmakers and other creatives working in the pipeline across multiple locations can deliver feedback across digital and physical environments where actors are physically working on the set. Unreal Engine by Epic Games is at the forefront of this technology, leading creatives on the cutting edge as the global environment changes in every aspect of production. Epic Games’ partners in virtual production include Digital Domain, ILM Lab, FOX VFX Lab, Halon Entertainment, Method Studios, and NVIDIA.


SIGGRAPH 2021 didn’t have many new announcements, so we will talk about what vendors presented at the show this year.

NVIDIA

NVIDIA is one of the leaders in the world of Metaverse technologies. Over 500 companies are evaluating Omniverse.

On 10 August 2021, NVIDIA announced the expansion of the NVIDIA Omniverse platform, which it describes as the world’s first real-time simulation and collaboration platform delivering a foundation for the Metaverse. Integrations with Blender and Adobe will open up Omniverse to millions of users, giving artists the ability to collaborate interactively from within these packages.

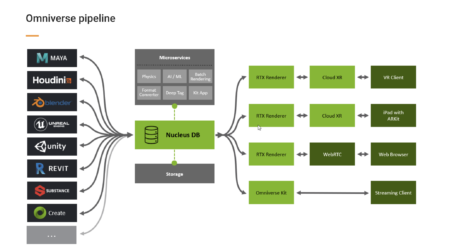

NVIDIA Omniverse is a technology platform that leverages a next-gen file format invented at Pixar and shared with the industry, plus the convergence of emergent technologies in cloud computing and hardware-accelerated real-time ray tracing, combined with openly shared APIs for developers to plug into.

Omniverse gives artists a way to work together in real-time across different software applications in a shared virtual world. A key to Omniverse’s early adoption is Pixar’s open-sourced USD file format, the foundation of Omniverse’s collaboration and simulation platform. USD is being called the “HTML of the Metaverse.” Omniverse has a quickly growing ecosystem of supporting partners. Some of the early adopters are SHoP Architects in NYC, South Park, and Lockheed Martin.
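
To make the idea of several applications sharing one scene a little more concrete, here is a toy model in plain Python of the layering principle USD is built on: each application contributes its own layer of "opinions" about the scene, and when two layers disagree about an attribute, the stronger layer wins. This is only a sketch. It does not use Pixar's actual USD library or anything from Omniverse, and the `compose` function, the prim paths, and the attribute values are all hypothetical names made up for illustration.

```python
from typing import Dict, List

# A "layer" here is just a mapping from a scene path to attribute values.
# This is a toy stand-in for a USD layer, not the real USD data model.
Layer = Dict[str, Dict[str, object]]


def compose(layers_strongest_first: List[Layer]) -> Layer:
    """Merge layers so that stronger layers override weaker ones."""
    composed: Layer = {}
    # Walk from weakest to strongest so later (stronger) opinions overwrite.
    for layer in reversed(layers_strongest_first):
        for prim_path, attrs in layer.items():
            composed.setdefault(prim_path, {}).update(attrs)
    return composed


# Hypothetical contributions from three applications in one session.
modeling_layer: Layer = {"/Building/Wall": {"height_m": 3.0, "material": "default"}}
texturing_layer: Layer = {"/Building/Wall": {"material": "brushed_concrete"}}
furnishing_layer: Layer = {"/Building/Chair": {"position": (1.0, 0.0, 2.0)}}

# The texturing layer is listed first, so its material opinion is strongest.
stage = compose([texturing_layer, furnishing_layer, modeling_layer])

for path, attrs in sorted(stage.items()):
    print(path, attrs)
```

In this toy session the wall keeps the height set in the modeling layer but takes the material from the texturing layer, and nobody's source layer is overwritten, which is the property that lets different tools contribute to one scene without stepping on each other.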

A chart showing the technical formation of the Omniverse platform as a technology pipeline for production. (click to enlarge)

“The ability to make decisions quickly and accurately across our interdisciplinary team is core to SHoP’s process,” said Geof Bell, Associate, Digital Design and Delivery at SHoP Architects. “Omniverse will enable us to bring data from multiple experts together in a single authoritative way, curating it to present the right information at the right time, paired with high-quality visuals for intelligent, evocative design.”

Apple, Pixar, and NVIDIA have collaborated to bring advanced physics to USD, embracing the open standard to bring 3D pipelines to billions of devices. Blender and NVIDIA have teamed up to bring USD support to the upcoming release of Blender 3.0. Adobe offers artists a way to work in real-time in Omniverse using the Substance Designer Collection of tools.

NVIDIA’s 3D research team in Toronto showed the GANverse3D Image2Car extension for Omniverse Create at SIGGRAPH 2021, along with updates for the Kaolin library and Omniverse Kaolin App. NVIDIA Research also announced work addressing two of the most challenging problems in graphics, real-time path tracing and content creation, including neural radiance caching for global illumination.

Millions of people will work and play in the Metaverse, living through ground-breaking visual experiences that blur reality and virtual reality. What makes so much of this possible is the ability to render to screens life-like realism in real-time and to virtual headsets that provide stereoscopic 1:1 scale virtual experiences.

Neural radiance caching uses NVIDIA RT Cores for ray tracing and Tensor Cores for AI acceleration, with a neural network that adapts and learns how light is distributed through the scene. NVIDIA research also addresses textures that are difficult to store and render efficiently, such as a carpet or blades of grass, through NeRF-Tex, which uses neural networks to represent these challenging materials and encode how they respond to lighting. NVIDIA researchers have also developed an “inverse rendering method” that keeps the same geometry looking realistic whether it is seen close-up or from a distance. Creators can generate simpler models that are optimized to appear indistinguishable from the originals with drastic reductions in their geometric complexity.

NVIDIA also showed a documentary, “Connecting in the Metaverse: The Making of the GTC Keynote.” NVIDIA used Omniverse to create the GTC Keynote and made 21 versions of CEO Jensen Huang. His virtual kitchen alone, with 6000+ objects and hundreds of thousands of polygons, demonstrates the power of RTX rendering in Omniverse.

All of this work in Omniverse leverages NVIDIA RTX technologies. Watch this special SIGGRAPH 2021 presentation by Richard Kerris (formerly of Apple and Lucasfilm) and Sanja Fidler, both of NVIDIA.

Thousands of photographs of the kitchen were put through a photogrammetry program to create a photorealistic model. Jensen was scanned in a truck that came to his house, which produced an accurate model while requiring minimal data to animate him. Facial animation was driven by audio-to-gesture tools, and his body was animated with motion capture from an actor who imitated Jensen’s moves from past keynotes. Also shown was how BMW used Omniverse to create and configure a vast factory, and how Drive Sim, using Render RTX, displayed real-time driving in a Mercedes against realistic backgrounds built from point cloud data imported into Omniverse. NVIDIA also spoke about the latest version of PhysX delivering true physics in Omniverse simulations.

Omniverse is changing the way we work, create, and socialize. I can’t wait to see how architects use this technology in the future.

NVIDIA GPUs

The NVIDIA RTX A2000 was released during this SIGGRAPH. It is a low-cost, entry-level workstation card that delivers RTX to artists and other content creators.

At an estimated retail price of 495 USD, the NVIDIA RTX A2000 boasts:

- 13.25 billion transistors

- 26 RT (ray tracing) cores

- 104 Tensor cores

- 3328 CUDA cores

- 8 TF (32-bit / single-precision)

- 15.6 TF for RT core performance

- 63.9 TF for Tensor core performance
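
For readers wondering where the 8 TF single-precision figure comes from, it can be roughly reproduced from the CUDA core count above. The short Python sketch below uses the usual peak-throughput estimate of two floating-point operations per core per clock (one fused multiply-add). The 1.2 GHz boost clock is an assumption on our part, since it is not listed in the specifications above, and the function name is just an illustrative one.

```python
def fp32_tflops(cuda_cores: int, boost_clock_ghz: float,
                flops_per_core_per_clock: int = 2) -> float:
    """Estimate peak single-precision throughput in teraflops.

    Assumes each CUDA core retires one fused multiply-add (2 FLOPs) per clock.
    """
    gigaflops = cuda_cores * flops_per_core_per_clock * boost_clock_ghz
    return gigaflops / 1000.0


# 3328 CUDA cores is from the list above; 1.2 GHz is an assumed boost clock.
a2000_estimate = fp32_tflops(cuda_cores=3328, boost_clock_ghz=1.2)
print(f"Estimated FP32 peak: {a2000_estimate:.2f} TF")
```

This is a theoretical peak, so real-world rendering performance will depend on the workload, memory bandwidth, and drivers.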

More details and more pictures can be found at Architosh’s specific news report here.

Hewlett-Packard

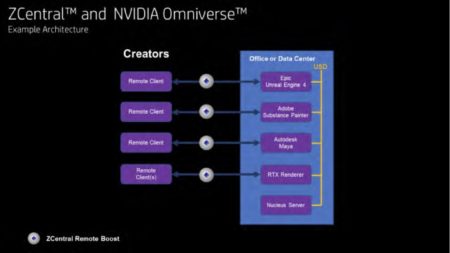

HP showed off its Z workstations powered by NVIDIA RTX technology, along with ZCentral Remote Boost, remote desktop software that just won HP an Emmy. HP also published a white paper on using HP ZCentral with NVIDIA Omniverse. ZCentral is a solution that allows creatives to connect remotely and securely to HP’s powerful Z Workstations.

The white paper describes HP ZCentral and Omniverse concepts, the applications and workflows supported, typical HP ZCentral-based configurations, and details for setting up an Omniverse deployment. The intended audience is creatives, studios, and IT professionals.

You can download the Omniverse Beta here.

Epic Games

Epic Games announced a billion-dollar funding round for the Metaverse in April. Content will be a driving force in creating these worlds, and Unreal Engine will be one of the leaders in developing this technology. Marc Petit, VP & General Manager at Epic Games, and Patrick Cozzi, CEO at Cesium, co-hosted a Birds of a Feather session, “Building the Open Metaverse,” featuring technical presenters from various organizations. They discussed their hopes for standards in the Metaverse and said they want people to support a safe space.

What is Virtual Production? Epic lays it out around their Unreal Engine technology in this diagram.

Epic Games gave us a preview of the upcoming release of Unreal Engine 4.27 for virtual production. VFX takes a giant leap forward in 4.27, with new features that improve the ease of use, efficiency, and quality filmmakers expect and that make game-changing techniques more accessible and practical than ever. Unreal 5.0, still a bit out on the horizon, is in pre-release with limited features.

Epic Games also announced that Ariana Grande is stepping into the Metaverse as the headliner for the Fortnite Rift Tour.

Epic Games discussed the new OpenColorIO v2 for color management, a key component of modern VFX, animation, gaming, and architectural software.

Amazon

Amazon Web Services presented sessions “Through the Looking Glass: The Next Reality of Content Production” and “The Future of Visual Effects.”


Jen Dahlman from Amazon spoke about how AWS’s internal teams of creatives worked from home on the animated short “Spanner” using the cloud. AWS was one of the early cloud solutions providers. VFX companies initially used the cloud for rendering and storage, but access to applications through the cloud has increased due to demand during the pandemic. Software developers had to find a new way to deliver their applications via the cloud so creatives could use those tools in production.

Remote workforces are now a core part of Global Production and of how film and television production will change in the future. Amazon talked about how cloud technology is helping professionals in the creative industry worldwide deal with today’s challenges and meet the increased demand for high-fidelity content. Netflix has 36 productions currently using the cloud for Global Production and will increase Global Production for all its future projects.
